
LDoubleZhi

Posted in Community Help (SOS!!)

Can I simply change `backbone_pretrained: 'checkpoints/mobilenet_v2.pth'` in the YAML file to specify my model file, and then set `args.do_test=True`?
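The key name `backbone_pretrained` suggests it may load only backbone weights, while testing needs the full detector. One way to check what a `.pth` file actually contains is to compare its state-dict keys with the model's keys. A minimal sketch in plain Python, assuming you have already extracted both key lists (for example from the dict returned by `torch.load(...)`, which may be nested under a key such as `state_dict`, and from `model.state_dict().keys()`); the helper `checkpoint_coverage` and the `backbone.` prefix are hypothetical and depend on how the project names its modules.

```python
def checkpoint_coverage(model_keys, ckpt_keys, backbone_prefix="backbone."):
    """Compare checkpoint keys against model keys.

    backbone_prefix is a hypothetical naming convention; adjust it to the project.
    Returns (missing, unexpected, backbone_only).
    """
    model_keys = set(model_keys)
    ckpt_keys = set(ckpt_keys)
    missing = sorted(model_keys - ckpt_keys)     # model params the file does not provide
    unexpected = sorted(ckpt_keys - model_keys)  # file params the model has no slot for
    shared = model_keys & ckpt_keys
    # Backbone-only: every shared key belongs to the backbone, and at least
    # one non-backbone (head) parameter is left without weights.
    backbone_only = (
        bool(shared)
        and all(k.startswith(backbone_prefix) for k in shared)
        and any(not k.startswith(backbone_prefix) for k in missing)
    )
    return missing, unexpected, backbone_only
```

If the file uses a different prefix altogether (for example torchvision-style `features.` keys), `unexpected` will be large and `backbone_only` will be `False`, which itself signals that the file was not saved from this model.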


LDoubleZhi

Posted in Community Help (SOS!!)

[Epoch 0/150][Iter 0/86][lr 0.000000][Loss: anchor 9.16, iou 8.31, l1 35.46, conf 99672.05, cls 16.66, imgsize 608, time: 26.08]

[Epoch 0/150][Iter 10/86][lr 0.000000][Loss: anchor 18.68, iou 18.18, l1 101.71, conf 2369.89, cls 39.56, imgsize 608, time: 39.37]

[Epoch 0/150][Iter 20/86][lr 0.000003][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 416, time: 45.88]

[Epoch 0/150][Iter 30/86][lr 0.000015][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 352, time: 26.93]

[Epoch 0/150][Iter 40/86][lr 0.000047][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 384, time: 24.05]

[Epoch 0/150][Iter 50/86][lr 0.000114][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 480, time: 30.52]

[Epoch 0/150][Iter 60/86][lr 0.000237][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 576, time: 39.93]

[Epoch 0/150][Iter 70/86][lr 0.000439][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 480, time: 32.17]

[Epoch 0/150][Iter 80/86][lr 0.000749][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 448, time: 36.69]

[Epoch 1/150][Iter 0/86][lr 0.001000][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 352, time: 23.47]

[Epoch 1/150][Iter 10/86][lr 0.001000][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 352, time: 15.24]

[Epoch 1/150][Iter 20/86][lr 0.001000][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 512, time: 34.18]

[Epoch 1/150][Iter 30/86][lr 0.001000][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 544, time: 48.47]

[Epoch 1/150][Iter 40/86][lr 0.001000][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 416, time: 28.83]

[Epoch 1/150][Iter 50/86][lr 0.001000][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 416, time: 19.87]

[Epoch 1/150][Iter 60/86][lr 0.001000][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 352, time: 18.90]

[Epoch 1/150][Iter 70/86][lr 0.001000][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 576, time: 19.08]

[Epoch 1/150][Iter 80/86][lr 0.001000][Loss: anchor nan, iou nan, l1 nan, conf nan, cls nan, imgsize 608, time: 34.87]
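In this log the `conf` loss is already very large at iteration 0 (99672.05) and every loss term is `nan` from iteration 20 on. A small sketch for locating the first non-finite entry in a log of this shape; the regex assumes exactly the `[Epoch e/E][Iter i/N][lr x][Loss: name value, ...]` layout shown above, and `parse_line` / `first_non_finite` are hypothetical helpers, not part of any project's tooling.

```python
import math
import re

# Matches lines like "[Epoch 0/150][Iter 20/86][lr 0.000003][Loss: anchor nan, iou nan, ...]"
LINE_RE = re.compile(r"\[Epoch (\d+)/\d+\]\[Iter (\d+)/\d+\]\[lr ([\d.]+)\]\[Loss: ([^\]]*)\]")


def parse_line(line):
    """Return (epoch, iteration, lr, {loss_name: value}) or None if the line does not match."""
    m = LINE_RE.search(line)
    if m is None:
        return None
    epoch, it, lr, body = int(m.group(1)), int(m.group(2)), float(m.group(3)), m.group(4)
    losses = {}
    for item in body.split(","):
        parts = item.split()
        if len(parts) != 2:
            continue
        name, value = parts
        if name in ("imgsize", "time:"):  # not loss terms
            continue
        losses[name] = float(value)  # float("nan") parses to NaN
    return epoch, it, lr, losses


def first_non_finite(lines):
    """Return (epoch, iteration, lr, bad_terms) for the first line with a NaN/inf loss, else None."""
    for line in lines:
        parsed = parse_line(line)
        if parsed is None:
            continue
        epoch, it, lr, losses = parsed
        bad = sorted(k for k, v in losses.items() if not math.isfinite(v))
        if bad:
            return epoch, it, lr, bad
    return None
```

Run on the first three log lines, this reports epoch 0, iteration 20 (lr 0.000003) with all five loss terms non-finite, which narrows down where to start looking.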


LDoubleZhi

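Posted in Community Help (SOS!!)

@金天 I think I found the cause: in demo_det_r010_custom.py, the `hm` value in `heads = {'hm': 5, 'reg': 2, 'wh': 2}` has to be set to the number of classes in your own dataset. I could cry; this took me a whole week.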
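The fix amounts to deriving the `hm` (heatmap) channel count from the dataset's class list instead of hard-coding it. A minimal sketch; `CLASS_NAMES`, `make_heads`, and `check_heads` are hypothetical names, and the `reg`/`wh` values of 2 are taken from the snippet in the post.

```python
# Hypothetical class list for a custom dataset; replace with your own.
CLASS_NAMES = ["person", "car", "bicycle"]


def make_heads(class_names):
    """Build a CenterNet-style heads dict: one heatmap channel per class,
    plus 2 channels each for the center offset (reg) and box size (wh)."""
    if not class_names:
        raise ValueError("class_names must not be empty")
    return {"hm": len(class_names), "reg": 2, "wh": 2}


def check_heads(heads, num_classes):
    """Fail fast if the heatmap head does not match the dataset's class count."""
    if heads["hm"] != num_classes:
        raise ValueError(
            f"heads['hm'] = {heads['hm']} but the dataset has {num_classes} classes"
        )
```

Calling `check_heads` once at startup with the class count the dataset loader reports turns a silent week-long debugging session into an immediate error.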


LDoubleZhi

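Posted in Community Help (SOS!!)

@金天 A summary of my questions:
1. Do I need to disable BN in torch the way the original author does?
2. Which Python files does the 奇异ai project mainly modify? If I want to update it with the author's latest code, I must not overwrite those files.

With the same dataset and the same hyperparameters, training with the 奇异ai version gives far worse results.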
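For the second question, one way to see which Python files a fork changed relative to the upstream code is to compare the two checkouts directly. A sketch using the standard-library `filecmp` module; `changed_python_files` is a hypothetical helper and the two directory arguments are placeholders for your local copies. Note that `filecmp` treats files with identical size and modification time as equal without reading them, which is rarely an issue for separate checkouts.

```python
import filecmp
import os


def changed_python_files(upstream_dir, fork_dir):
    """Walk two directory trees and report .py files that differ, exist only
    in the fork, or exist only upstream. Paths are relative to each root."""
    modified, only_fork, only_upstream = [], [], []

    def walk(cmp, rel):
        for name in cmp.diff_files:
            if name.endswith(".py"):
                modified.append(os.path.join(rel, name))
        for name in cmp.right_only:
            if name.endswith(".py"):
                only_fork.append(os.path.join(rel, name))
        for name in cmp.left_only:
            if name.endswith(".py"):
                only_upstream.append(os.path.join(rel, name))
        # Recurse into directories present in both trees
        for sub_name, sub_cmp in cmp.subdirs.items():
            walk(sub_cmp, os.path.join(rel, sub_name))

    walk(filecmp.dircmp(upstream_dir, fork_dir), "")
    return sorted(modified), sorted(only_fork), sorted(only_upstream)
```

The `modified` and `only_fork` lists are the files to protect (or merge by hand) when pulling newer upstream code into the fork.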


LDoubleZhi

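Posted in Community Help (SOS!!)

@金天 Tonight I'll make opts, debugger, and coco_custom.py fully match the original project (hyperparameters and class settings) and train again to see the results. If there are still false detections, the code must have a problem: with the same dataset, the model I trained with xingyizhou's code works very well.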
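Before retraining, it may help to print every option that differs between the two projects instead of comparing files by eye. A minimal sketch, assuming each project's options have already been collected into a plain dict (for example via `vars(opt)` on an `argparse.Namespace`); the `diff_options` helper and the sample values below are hypothetical.

```python
_MISSING = object()  # sentinel distinguishing "key absent" from a real None value


def diff_options(original, custom):
    """Return {key: (original_value, custom_value)} for keys whose values differ
    or that exist in only one of the two dicts (absent shown as '<missing>')."""
    diffs = {}
    for key in sorted(set(original) | set(custom)):
        a = original.get(key, _MISSING)
        b = custom.get(key, _MISSING)
        if a != b:
            diffs[key] = (
                "<missing>" if a is _MISSING else a,
                "<missing>" if b is _MISSING else b,
            )
    return diffs


# Hypothetical option values, for illustration only.
original = {"lr": 1.25e-4, "batch_size": 32, "num_classes": 5}
custom = {"lr": 5e-4, "batch_size": 32, "num_classes": 80, "input_res": 512}
for key, (a, b) in diff_options(original, custom).items():
    print(f"{key}: original={a} custom={b}")
```

An empty result means the two option sets match, so any remaining difference in detection quality would have to come from the code rather than the configuration.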


LDoubleZhi

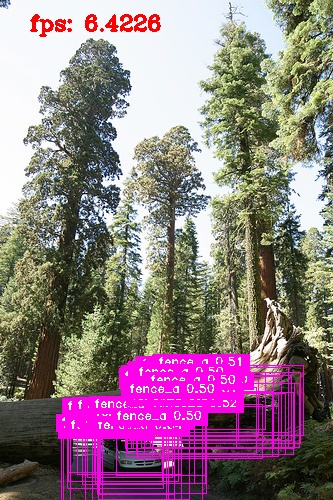

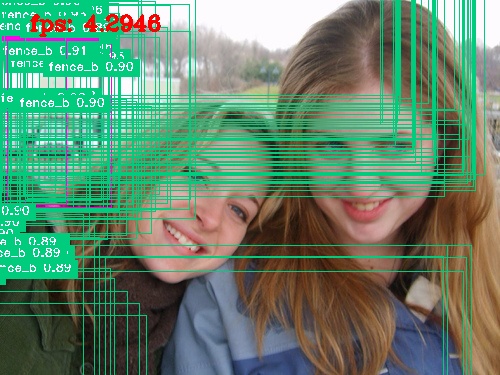

Posted in Community Help (SOS!!)

train: [88][81/82]|Tot: 0:03:23 |ETA: 0:00:03 |loss 0.8437 |hm_loss 0.4320 |wh_loss 1.9508 |off_loss 0.2166 |Data 0.020s(0.035s) |Net 2.479s

ctdet/default |################################| train: [89][81/82]|Tot: 0:03:22 |ETA: 0:00:03 |loss 0.8449 |hm_loss 0.4285 |wh_loss 1.9778 |off_loss 0.2186 |Data 0.020s(0.033s) |Net 2.475s

ctdet/default |################################| train: [90][81/82]|Tot: 0:03:23 |ETA: 0:00:03 |loss 0.8749 |hm_loss 0.4565 |wh_loss 2.0108 |off_loss 0.2173 |Data 0.020s(0.034s) |Net 2.476s

ctdet/default |################################| val: [90][296/297]|Tot: 0:00:26 |ETA: 0:00:01 |loss 1.3022 |hm_loss 0.8241 |wh_loss 2.6082 |off_loss 0.2173 |Data 0.000s(0.001s) |Net 0.089s

model_90.pth is saved!

The loss has dropped below 1, but the detection results are very poor.
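In the epoch-90 lines above, validation reports loss 1.3022 and hm_loss 0.8241, roughly 1.5× and 1.8× the training values (0.8749 and 0.4565), so the training loss alone does not show how well the model generalizes. A small sketch for pulling the loss fields out of progress lines in this `|name value` format so train and val can be compared per epoch; the regexes are assumptions based only on the lines shown, and `parse_progress` / `val_train_ratio` are hypothetical helpers.

```python
import re

# Matches "train: [90][81/82]" or "val: [90][296/297]" and captures phase and epoch.
HEADER_RE = re.compile(r"\b(train|val): \[(\d+)\]")
# Matches "|loss 0.8749", "|hm_loss 0.4565", etc. (only fields whose name ends in "loss").
LOSS_RE = re.compile(r"\|(\w*loss) ([\d.]+)")


def parse_progress(line):
    """Return (phase, epoch, {loss_name: value}) or None for non-matching lines."""
    header = HEADER_RE.search(line)
    if header is None:
        return None
    losses = {name: float(value) for name, value in LOSS_RE.findall(line)}
    return header.group(1), int(header.group(2)), losses


def val_train_ratio(train_line, val_line):
    """Ratio val/train for every loss term present in both lines."""
    _, _, train = parse_progress(train_line)
    _, _, val = parse_progress(val_line)
    return {k: val[k] / train[k] for k in train if k in val and train[k] > 0}
```

A ratio that keeps growing across epochs is one signal worth checking alongside visual inspection of the detections.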
